ReadyBoost–Robbie’s Benchmark

2012-04-14

Decheng (Robbie) Fan

Background: I was not confident about Windows Vista ReadyBoost. I always thought it didn’t meet my expectations, because my rough benchmark showed that it was quite ineffective after a memory hog program had been used to clear out processes’ working sets. This time, however, I understand it better, and I think that although it doesn’t seem to be as good as Windows 7 ReadyBoost, it still has a real effect.

Configuration: a laptop computer with a 120GB 5400RPM hard disk drive (50MB/s sequential read speed); an Intel Core 2 Duo CPU running at 800MHz (power-saving mode); 3GB of RAM; and a 4GB ReadyBoost cache on a Kingston 16GB USB thumb drive (20MB/s sequential read speed, 7MB/s sequential write speed).

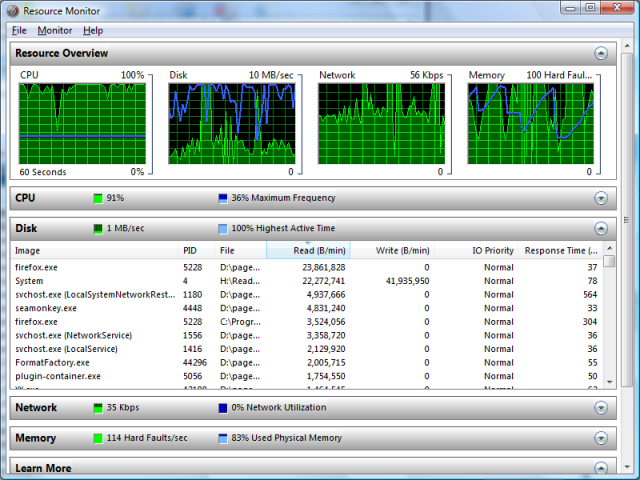

Benchmark method: I use two 1GB memory hog processes. Each memory hog program essentially allocates 1GB of memory, touches every page in it, and then frees the memory. Both are first set to relaunch repeatedly. After 20~30 repetitions, I close the memory hogs and start timing. I then wait until disk activity returns to normal (average disk time <= 30% as seen in Resource Monitor, or Cached >= 300MB as seen in Task Manager) and stop timing.
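The memory hog program itself is not shown, so here is a minimal Python sketch of the behaviour described, assuming that allocating a `bytearray` and writing one byte per 4KB page is enough to commit every page. The names `memory_hog`, `recovered` and `hog_loop` are hypothetical, and the stop criterion simply encodes the two thresholds above as if the readings were available as numbers.

```python
PAGE_SIZE = 4096  # 4KB pages, as on x86/x64 Windows


def memory_hog(size_bytes: int, page_size: int = PAGE_SIZE) -> int:
    """Allocate size_bytes, write one byte into every page, then free it.

    Returns the number of pages touched.
    """
    buf = bytearray(size_bytes)                  # allocate the block
    pages = len(range(0, size_bytes, page_size))
    # Write one byte at the start of each page so that every page is
    # committed and becomes part of this process's working set.
    buf[::page_size] = b"\x01" * pages
    del buf                                      # release the memory
    return pages


def recovered(avg_disk_time_pct: float, cached_mb: float) -> bool:
    """Stop criterion: average disk time <= 30% or Cached >= 300MB."""
    return avg_disk_time_pct <= 30 or cached_mb >= 300


def hog_loop(size_bytes: int, repetitions: int) -> None:
    """Relaunch the hog repeatedly (hypothetical driver for 20~30 runs)."""
    for _ in range(repetitions):
        memory_hog(size_bytes)
```

To mirror the setup, two copies of `hog_loop(1 << 30, 25)` would run as separate processes, so that together they push about 2GB out of RAM on each pass.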

Result:

- Without ReadyBoost: 155s

- ReadyBoost without much pre-population: 119s

- ReadyBoost with enough pre-population: 35s
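To put the three timings on a common scale, this short Python snippet computes the speedup and the fraction of time saved relative to the run without ReadyBoost. It uses only the numbers listed above; the helper name `compare` is illustrative.

```python
# Recovery times from the results above, in seconds.
results = {
    "Without ReadyBoost": 155,
    "ReadyBoost without much pre-population": 119,
    "ReadyBoost with enough pre-population": 35,
}


def compare(baseline_s: float, seconds: float) -> tuple[float, float]:
    """Return (speedup factor, percentage of time saved) versus the baseline."""
    return baseline_s / seconds, (baseline_s - seconds) / baseline_s * 100


for name, seconds in results.items():
    speedup, saved = compare(results["Without ReadyBoost"], seconds)
    print(f"{name}: {speedup:.2f}x, {saved:.1f}% less time")
```

This works out to about 1.30x (23.2% less time) without much pre-population and about 4.43x (77.4% less time) with enough pre-population.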

Explanation: Windows implements the concept of process working sets. Each process has a 4GB (or 16TB on x64) virtual address space, parts of which are mapped as 4KB memory pages. Windows keeps track of which pages have been used recently and which less recently, and tries to keep as many recently used pages in RAM as possible. Less recently used pages are paged out to the hard disk drive. Note that the virtual address space may contain anonymous memory (such as global variables in a C program and memory allocated by “malloc”), which is backed by the paging file, as well as portions of memory-mapped files (including the code of executable files). If a page has been modified since it was last loaded into RAM (or it is newly allocated memory that has never existed on disk), paging it out requires writing it to the paging file or to the memory-mapped file; otherwise, no write is needed and the page can simply be discarded.
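The page-out rule above can be written as a small decision function. This is a simplified Python model, not the Windows memory manager: the `Backing` enum and `page_out_action` are hypothetical names, and details such as copy-on-write pages changing their backing store are ignored. The last two lines show how many 4KB pages the two address-space sizes correspond to.

```python
from enum import Enum


class Backing(Enum):
    PAGING_FILE = "paging file"   # anonymous memory: globals, malloc'd heap
    MAPPED_FILE = "mapped file"   # memory-mapped files, executable code


def page_out_action(backing: Backing, dirty: bool) -> str:
    """What evicting one page costs, following the rule described above."""
    if dirty:
        # Modified since it was last read in (or never existed on disk):
        # the contents must be saved before the RAM can be reused.
        return f"write to {backing.value}"
    # Clean page: an identical copy already exists on disk, so just drop it.
    return "discard (no I/O)"


PAGE = 4 * 1024
print((4 * 1024**3) // PAGE)    # pages in a 4GB address space: 1048576
print((16 * 1024**4) // PAGE)   # pages in a 16TB address space: 4294967296
```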

Whenever a process accesses a page that is not in RAM, a page-in operation is required. Because which pages were paged out depends on the usage pattern, they are likely to be scattered around the address space (especially with dynamic memory allocation). A minor additional issue is that the free space inside the paging file is sometimes not contiguous. Because of these two issues, paging in may involve many fairly random reads. This brings us to the performance bottleneck of the contemporary hard disk drive: seek time plus rotational latency. Every random read requires a seek and a rotation, which takes about 10~20ms (milliseconds) on a hard disk drive; even on a 7200RPM drive it is still above 5ms. Windows can optimize page-ins of contiguous pages by reading ahead several pages at a time from the paging file. In the worst case, where no read-ahead page is ever used, each 10ms read yields a single 4KB page, which gives 400KB per second. Usually it is better, but from what I see in Resource Monitor, the average speed is below 2MB per second.
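The worst-case figure follows from dividing the useful data per read by the time per read. The Python sketch below makes that arithmetic explicit; `page_in_throughput` is a hypothetical helper, and the second call assumes, purely for illustration, a read-ahead that yields 4 useful pages per read.

```python
PAGE_BYTES = 4 * 1024  # one 4KB page


def page_in_throughput(latency_s: float, useful_pages_per_read: int = 1) -> float:
    """Bytes per second of useful data when every read costs one seek+rotation.

    useful_pages_per_read = 1 is the worst case in the text: read-ahead
    never hits, so each random read yields exactly one needed page.
    """
    return useful_pages_per_read * PAGE_BYTES / latency_s


worst = page_in_throughput(0.010)                  # 10ms per random read
print(f"worst case: {worst / 1024:.0f} KB/s")      # 400 KB/s

# Hypothetical: read-ahead brings in 4 pages that all turn out to be needed.
better = page_in_throughput(0.010, 4)
print(f"4 useful pages/read: {better / 1024:.0f} KB/s")
```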

On the other hand, the USB thumb drive, being made of flash memory, has a much faster random read speed, at about 1ms per read (although still much slower than RAM). So we can hope to use it to accelerate page-ins. However, it cannot accelerate page-outs very much, because its sequential write speed is only about 5~10MB/s. Since it is removable, and its quality varies between brands and models, it is not an ideal replacement for the paging file. So ReadyBoost uses it as a speed booster, not as a location for the paging file.
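A rough comparison of the two latencies: the Python sketch below estimates how long it would take to read back 100MB of scattered pages one 4KB random read at a time, at about 10ms per read on the hard disk and about 1ms on the flash drive. The 100MB amount and the helper `random_read_seconds` are illustrative assumptions, and read-ahead is ignored.

```python
PAGE_BYTES = 4 * 1024


def random_read_seconds(total_bytes: int, latency_ms: float) -> float:
    """Time to read total_bytes as independent 4KB random reads."""
    pages = -(-total_bytes // PAGE_BYTES)      # ceiling division
    return pages * latency_ms / 1000


scattered = 100 * 1024**2                      # 100MB of scattered pages
hdd = random_read_seconds(scattered, 10)       # ~10ms per random read (HDD)
flash = random_read_seconds(scattered, 1)      # ~1ms per random read (flash)
print(f"HDD: {hdd:.1f}s, flash: {flash:.1f}s, ratio: {hdd / flash:.0f}x")
# HDD: 256.0s, flash: 25.6s, ratio: 10x
```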

Windows Vista’s approach to utilizing the flash memory device consists of two parts, Superfetch and ReadyBoost. The ReadyBoost cache is filled in two ways: it is populated by Superfetch, or it stores page-outs and disk writes. Superfetch population accounts for most of the data in the ReadyBoost cache. Superfetch is a technology that “predicts” which pages will be used in the future. As an implementation of a theory from Microsoft Research, it applies AI techniques to access pattern analysis, and as a result keeps pages in RAM or in flash memory ranked by how likely they are to be accessed in the future. Pages that are more likely to be accessed are put in RAM, while those that are less likely to be accessed are put in flash memory.
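The ranking idea can be illustrated with a toy placement function. This is not Superfetch’s actual algorithm, whose prediction model is not described here; the likelihood values, page names and capacities below are made up, and the sketch only shows the tiering: the most likely pages go to RAM, the next tier to the flash cache, and the rest stay only on disk.

```python
def place_pages(likelihood: dict[str, float], ram_pages: int, cache_pages: int):
    """Toy ranking: most likely pages go to RAM, the next tier to flash.

    likelihood maps a page id to a predicted probability of future access.
    Pages that fit in neither tier stay only on the hard disk.
    """
    ranked = sorted(likelihood, key=likelihood.get, reverse=True)
    ram = ranked[:ram_pages]
    flash = ranked[ram_pages:ram_pages + cache_pages]
    disk_only = ranked[ram_pages + cache_pages:]
    return ram, flash, disk_only


pred = {"A": 0.9, "B": 0.2, "C": 0.6, "D": 0.05, "E": 0.4}  # hypothetical
print(place_pages(pred, ram_pages=2, cache_pages=2))
# (['A', 'C'], ['E', 'B'], ['D'])
```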

As you might know, flash memory devices are slow at random writes. This is because every time an address is written to, the whole flash block (sometimes as large as 2MB) needs to be read (backed up), erased and rewritten. In order for ReadyBoost to write to the flash memory device efficiently, it has to buffer and serialize writes as much as possible. Since the pages being stored are quite random, reads from the device are also random, but this is exactly where the strength of flash memory lies, so it is not a real problem. ReadyBoost tests the flash memory device as soon as it is plugged in, and if its 4KB random read speed is above 2.5MB/s and its 512KB random write speed is above 1.75MB/s, it is considered suitable for acceleration. ReadyBoost also uses compression to make better use of the space in the cache.
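Two ideas from this paragraph, sketched in Python: the suitability test using the quoted thresholds, and a write buffer that collects 4KB pages and flushes them as one compressed sequential chunk. The buffer is a hypothetical illustration of “buffer and serialize”, not ReadyBoost’s real on-device format, and `zlib` is only a stand-in because the compression algorithm ReadyBoost uses is not stated here.

```python
import zlib


def readyboost_suitable(rand_read_4k_mbps: float, rand_write_512k_mbps: float) -> bool:
    """Thresholds quoted above: 4KB random reads above 2.5MB/s and
    512KB random writes above 1.75MB/s."""
    return rand_read_4k_mbps > 2.5 and rand_write_512k_mbps > 1.75


class WriteBuffer:
    """Hypothetical write coalescer: collect 4KB pages and flush them as one
    sequential chunk, so the flash device sees few large writes instead of
    many small random ones (each of which could force a whole-block rewrite)."""

    def __init__(self, chunk_pages: int):
        self.chunk_pages = chunk_pages
        self.pending: list[bytes] = []
        self.flushed_chunks: list[bytes] = []

    def add(self, page: bytes) -> None:
        self.pending.append(page)
        if len(self.pending) == self.chunk_pages:
            self.flush()

    def flush(self) -> None:
        if self.pending:
            # One sequential write of all pending pages, compressed
            # (zlib here is a stand-in for ReadyBoost's own compression).
            self.flushed_chunks.append(zlib.compress(b"".join(self.pending)))
            self.pending.clear()
```

Batching matters because, with 2MB flash blocks, a single unbuffered 4KB write can cost a rewrite of up to 512 pages’ worth of data.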

My benchmark shows how the ReadyBoost cache can become well populated when memory hog programs are run repeatedly. By “well populated” I mean that frequently used pages are in the cache. My explanation of the benchmark result is as follows. Before the memory hogs are first launched, suppose there are 1.4GB of working sets in RAM; the other 1.6GB of RAM then holds pages that are less likely to be accessed. The ReadyBoost cache is populated with pages that are even less likely to be accessed than those in that 1.6GB of RAM. So, if my memory hog programs drive the 1.6GB of cached pages and 0.4GB of working sets out of RAM, ReadyBoost is unlikely to help when these pages are paged back in.
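The numbers in this scenario can be checked with a few lines of Python. The sketch assumes, as the explanation implies, that the 2GB taken by the memory hogs is satisfied first from the 1.6GB of less-used cached pages and only then by trimming working sets; `evictions` is a hypothetical helper.

```python
def evictions(ram_gb: float, working_sets_gb: float, hogs_gb: float):
    """Split the hogs' demand between cached (standby) pages and working sets.

    Assumes cached pages are given up first, then working sets are trimmed.
    """
    standby_gb = ram_gb - working_sets_gb            # RAM not in working sets
    from_standby = min(hogs_gb, standby_gb)
    from_working_sets = min(hogs_gb - from_standby, working_sets_gb)
    return from_standby, from_working_sets


standby, ws = evictions(ram_gb=3.0, working_sets_gb=1.4, hogs_gb=2 * 1.0)
print(f"cached pages evicted: {standby:.1f}GB, working sets evicted: {ws:.1f}GB")
```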

However, when memory hog programs are launched repeatedly, two things happen. First, while the memory hogs dominate RAM, Superfetch will from time to time find that pages cannot be prefetched into RAM. Second, the 0.4GB of previous working-set pages is periodically pushed out of RAM, so Superfetch tries to prefetch them. Each time, the 0.4GB has a certain chance of being stored in the ReadyBoost cache. Thus, after enough Superfetch prefetching, these pages are quite likely to be in the ReadyBoost cache and can be loaded quickly from it, rather than read slowly from the hard disk drive. This is my explanation of the benchmark result.
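Why repetition helps can be illustrated with a simple probability model. This is an assumption-laden sketch: it treats each prefetch attempt as an independent trial that stores a given page in the ReadyBoost cache with some fixed probability `p`, and it ignores pages later being evicted from the cache. The value `p = 0.1` is hypothetical and was not measured.

```python
def chance_cached(p_per_prefetch: float, prefetches: int) -> float:
    """Probability a page has landed in the ReadyBoost cache at least once
    after `prefetches` independent attempts, each succeeding with p."""
    return 1 - (1 - p_per_prefetch) ** prefetches


p = 0.1  # hypothetical per-attempt probability, for illustration only
for n in (1, 10, 30):
    print(f"after {n:2d} prefetches: {chance_cached(p, n):.2f}")
```

Even with a small per-attempt chance, 20~30 repetitions make it likely that most of those pages end up in the cache, which is consistent with the difference between the 119s and 35s runs.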

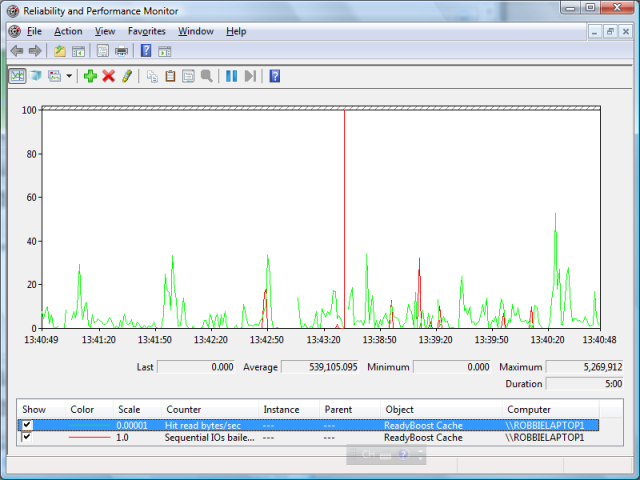

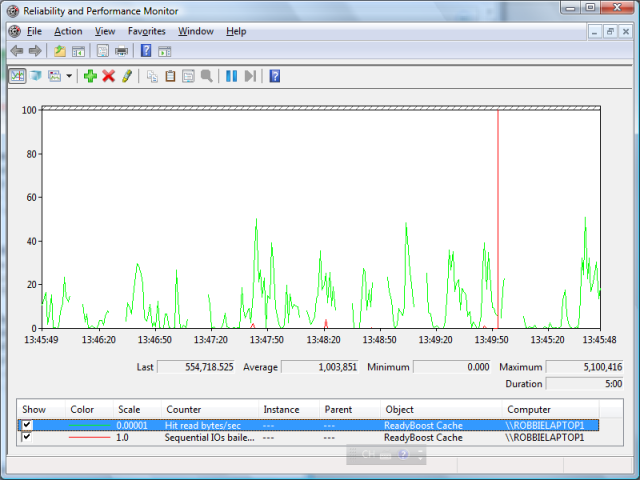

After prolonged use of ReadyBoost and watching its performance graph (Hit read bytes/sec and Sequential IOs bailed/sec), one small thing worth mentioning is that ReadyBoost, or strictly speaking Superfetch, doesn’t prefetch “data maps”, that is, file system structures (the MFT, directories (file names), etc.). Such data is cached in RAM only, by the Cache Manager.

In conclusion, here is my advice on using ReadyBoost well: when RAM becomes tight, first buy more RAM and install it in your machine if possible, and then add ReadyBoost with a fast USB thumb drive (plugged directly into a USB port rather than through an extension cable or hub). Try not to load too many different kinds of data into RAM. Use ReadyBoost when your laptop is on battery power, and it will save you energy. If your computer has a solid-state drive, forget about ReadyBoost and Superfetch.